Massive AWS outage mostly over, but some problems linger

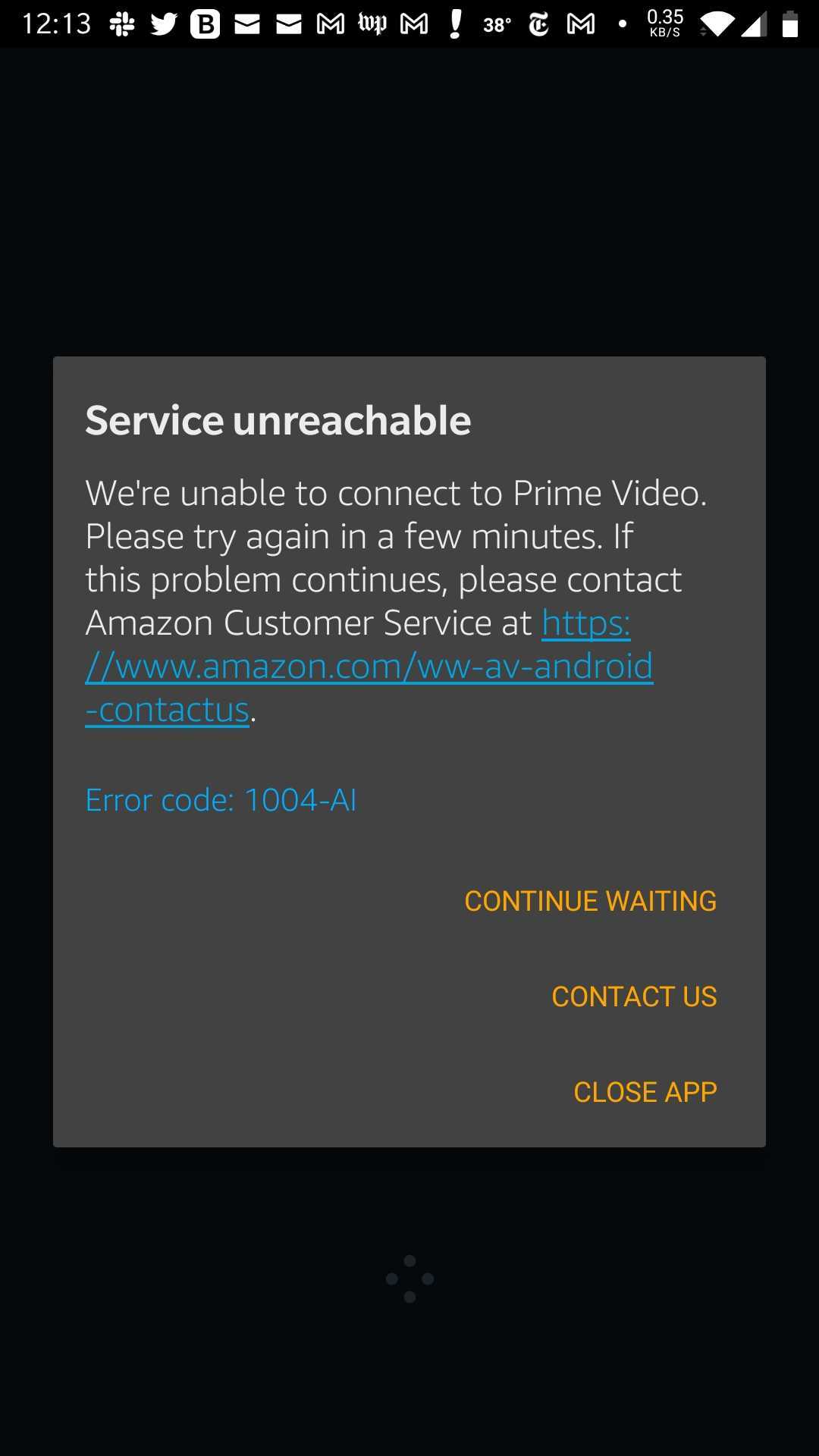

Amazon Web Services, the server company that hosts 32% of the internet according to Statista, has been hit with a major outage today (Dec. 7) affecting everything from Alexa, Prime Video and Ring security cameras to Disney Plus and League of Legends. Per a report by Bloomberg, it seems that Amazon delivery drivers are also being affected by the outage, which is affecting drop-off times. And according to users on the r/sysadmin subreddit, everything is being affected, from school systems to warehouses.

As of 6:30 p.m. ET, most of the problems that caused the service shutdown have been mitigated. Amazon says that all services are now independently working through recovery, and that AWS is still pushing towards full recovery. Certain services such as SSO, Connect, API Gateway, ECS/Fargate and EventBridge are still running into problems, however.

Down Detector, a website that tracks real-time outage data, shows AWS reporting significant problems. As of writing, here are all the services that are down or experiencing some problems:

- Disney Plus

- Prime Video

- Alexa

- Ring

- Tinder

- Canva

- League of Legends

- League of Legends: Wild Rift

- Valorant

- PUBG

- Dead by Daylight

- Clash of Clans

- Duolingo

- Pluto TV

The Amazon Web Services Twitter account has not yet given an update regarding this situation. But the official AWS Service Health Dashboard does have up-to-date intel on what's happening.

At 6:03 p.m. ET, AWS said that many services have now recovered. Only certain services are still being impacted.

In an earlier message, it stated that there is a problem in the US-EAST-1 Region, hosted in Virginia, but that a fix is being worked on:

"We are seeing impact to multiple AWS APIs in the US-EAST-1 Region. This issue is also affecting some of our monitoring and incident response tooling, which is delaying our ability to provide updates. We have identified the root cause and are actively working towards recovery."

That message was updated at around 1:15 p.m. ET to add that "We have identified root cause of the issue causing service API and console issues in the US-EAST-1 Region, and are starting to see some signs of recovery. We do not have an ETA for full recovery at this time."

Below we've listed all the previous updates in chronological order. All times are in PST.

[8:22 AM PST] We are investigating increased error rates for the AWS Management Console.

[8:26 AM PST] We are experiencing API and console issues in the US-EAST-1 Region. We have identified root cause and we are actively working towards recovery. This issue is affecting the global console landing page, which is also hosted in US-EAST-1. Customers may be able to access region-specific consoles going to https://console.aws.amazon.com/. So, to access the US-WEST-2 console, try https://us-west-2.console.aws.amazon.com/

[8:49 AM PST] We are experiencing elevated error rates for EC2 APIs in the US-EAST-1 region. We have identified root cause and we are actively working towards recovery.

[8:53 AM PST] We are experiencing degraded Contact handling by agents in the US-EAST-1 Region.

[8:57 AM PST] We are currently investigating increased error rates with DynamoDB Control Plane APIs, including the Backup and Restore APIs in US-EAST-1 Region.

[9:08 AM PST] We are experiencing degraded Contact handling by agents in the US-EAST-1 Region. Agents may experience issues logging in or being connected with end-customers.

[9:18 AM PST] We can confirm degraded Contact handling by agents in the US-EAST-1 Region. Agents may experience issues logging in or being connected with end-customers.

[9:37 AM PST] We are seeing impact to multiple AWS APIs in the US-EAST-1 Region. This issue is also affecting some of our monitoring and incident response tooling, which is delaying our ability to provide updates. We have identified the root cause and are actively working towards recovery.

[10:12 AM PST] We are seeing impact to multiple AWS APIs in the US-EAST-1 Region. This issue is also affecting some of our monitoring and incident response tooling, which is delaying our ability to provide updates. We have identified root cause of the issue causing service API and console issues in the US-EAST-1 Region, and are starting to see some signs of recovery. We do not have an ETA for full recovery at this time.

[11:26 AM PST] We are seeing impact to multiple AWS APIs in the US-EAST-1 Region. This issue is also affecting some of our monitoring and incident response tooling, which is delaying our ability to provide updates. Services impacted include: EC2, Connect, DynamoDB, Glue, Athena, Timestream, and Chime and other AWS Services in US-EAST-1. The root cause of this issue is an impairment of several network devices in the US-EAST-1 Region. We are pursuing multiple mitigation paths in parallel, and have seen some signs of recovery, but we do not have an ETA for full recovery at this time. Root logins for consoles in all AWS regions are affected by this issue, however customers can login to consoles other than US-EAST-1 by using an IAM role for authentication.

[12:34 PM PST] We continue to experience increased API error rates for multiple AWS Services in the US-EAST-1 Region. The root cause of this issue is an impairment of several network devices. We continue to work toward mitigation, and are actively working on a number of different mitigation and resolution actions. While we have observed some early signs of recovery, we do not have an ETA for full recovery. For customers experiencing issues signing-in to the AWS Management Console in US-EAST-1, we recommend retrying using a separate Management Console endpoint (such as https://us-west-2.console.aws.amazon.com/). Additionally, if you are attempting to login using root login credentials you may be unable to do so, even via console endpoints not in US-EAST-1. If you are impacted by this, we recommend using IAM Users or Roles for authentication. We will continue to provide updates here as we have more information to share.

[2:04 PM PST] We have executed a mitigation which is showing significant recovery in the US-EAST-1 Region. We are continuing to closely monitor the health of the network devices and we expect to continue to make progress towards full recovery. We still do not have an ETA for full recovery at this time.

[2:43 PM PST] We have mitigated the underlying issue that caused some network devices in the US-EAST-1 Region to be impaired. We are seeing improvement in availability across most AWS services. All services are now independently working through service-by-service recovery. We continue to work toward full recovery for all impacted AWS Services and API operations. In order to expedite overall recovery, we have temporarily disabled Event Deliveries for Amazon EventBridge in the US-EAST-1 Region. These events will still be received & accepted, and queued for later delivery.

[3:03 PM PST] Many services have already recovered, however we are working towards full recovery across services. Services like SSO, Connect, API Gateway, ECS/Fargate, and EventBridge are still experiencing impact. Engineers are actively working on resolving impact to these services.
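The 8:26 AM and 12:34 PM updates both point customers to region-specific console endpoints as a workaround. As a rough illustration only, the Python sketch below builds such URLs from a region name. It assumes the URL pattern seen in AWS's own example (https://us-west-2.console.aws.amazon.com/) applies to other regions as well; the helper names `regional_console_url` and `fallback_console_urls` and the region-name check are hypothetical and are not part of any AWS tool. The code makes no network requests, and as AWS noted, root logins could still fail on these endpoints during the outage.

```python
import re
from typing import Iterable, List

# Assumption: region names look like "us-west-2" or "ap-southeast-1".
# This is a loose sanity check, not an official list of AWS regions.
REGION_PATTERN = re.compile(r"^[a-z]{2}(-[a-z]+)+-\d+$")


def regional_console_url(region: str) -> str:
    """Build a region-specific console URL (hypothetical helper).

    Follows the pattern of the example URL quoted in the AWS updates.
    """
    normalized = region.strip().lower()
    if not REGION_PATTERN.match(normalized):
        raise ValueError(f"unexpected region name: {region!r}")
    return f"https://{normalized}.console.aws.amazon.com/"


def fallback_console_urls(impaired: str, candidates: Iterable[str]) -> List[str]:
    """Return console URLs for every candidate region except the impaired one."""
    impaired_normalized = impaired.strip().lower()
    return [
        regional_console_url(region)
        for region in candidates
        if region.strip().lower() != impaired_normalized
    ]


if __name__ == "__main__":
    # Skip the impaired US-EAST-1 region and list an alternative endpoint.
    for url in fallback_console_urls("US-EAST-1", ["us-east-1", "us-west-2"]):
        print(url)
```

Region names are lowercased before use, so the upper-case form used in the status updates (US-WEST-2) yields the same URL as the lower-case form.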

This is not the first time this has happened. In July, AWS services were disrupted for around two hours, causing issues on Amazon stores worldwide.

In June, another internet outage, this time affecting the Fastly content delivery network (CDN), brought down Amazon, Reddit, Twitch and huge numbers of other big sites.

We will continue updating this story as it develops.

Source: https://www.tomsguide.com/news/major-amazon-outage-hits-alexa-disney-plus-ring-pubg-and-more

Posted by: sharpthicy1994.blogspot.com
